We’ve been busy over the last few months, and the blog posts haven’t been flowing as expected, but that’s because exciting things have been taking place in the background. Our network is growing and there’s increasing interest in our developments. We’re about to start looking for the right investor to partner with to take our flagship prototype to market. SkyDog is a UAV payload for detecting, classifying and geotagging animals from any off-the-shelf UAV. More on that soon, but first I want to continue this development blog and provide some context on the problem we set out to solve.

The brief was for a UAV that could detect, classify, geotag, and (if necessary) track an animal for tactical and strategic land oversight. This is for farmers, pest controllers, hunters, and wildlife researchers, who all have different interests in knowing where different animals are on their property.

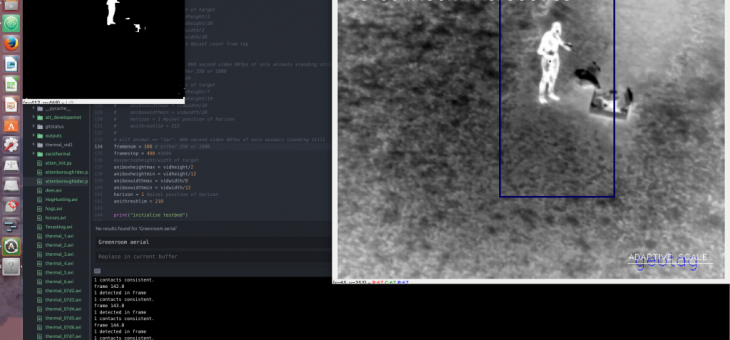

We decided to develop a payload that could be attached to any off-the-shelf drone, to make life as easy as possible for our customers. The payload has a FLIR Boson thermal camera to pick out animals at night, combined with a microprocessor running custom Computer Vision, Machine Learning (ML), and ‘backseat driver’ Artificial Intelligence (AI). Together these process the thermal video feed and enable adaptive maneuvering through the flight controller, while providing the operator with real-time updates of the geotagged locations of animals. Nothing like it existed on the market, nor in the academic research papers we read, which meant it was going to be a particularly tricky technical problem to solve.

So how did we do this? Usually, with any robotics or Computer Vision (CV) problem, the first thing to do is see how other people have solved the problem you want to solve, or at least find a solution to something similar. In our case, however, nobody had achieved anything close to what we were aiming for, or at least they weren’t sharing it online. There was nothing beyond the basic CV tracking functions embedded in off-the-shelf UAVs. These functions use various CV techniques to follow a set of feature points across frames in order to provide limited tracking capability. Some libraries automatically identify humans, or faces at least, though there is nothing out there yet for animals.

The catch is that the strong tracking functions all rely on knowing what you want to track in the first place. For us, the whole point of our payload software is to find an animal, and then determine whether or not it is worth tracking! And it has to do this from a UAV with changing heights, angles, and speeds, in an environment with changing temperatures and terrain, looking for animals with a range of sizes, orientations, and body temperatures. Are they in herds, or are they lying down under foliage? There were a lot of variables in play.

That is why we needed the thermal camera: to at least give us an edge. The number of variables also meant we needed an integrated approach to developing our AI. The CV would detect a possible target and feed it into the ML for classification; if it was what we were looking for, the system would geotag it and pass the confirmed target along to the user’s land management software. If required, the CV would enable the UAV to track the target (circle it for more footage, etc.) according to the user’s aims.
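To make the detect, classify, geotag flow above a little more concrete, here is a minimal Python sketch. It is not our production code: every name in it (`detect_candidates`, `geotag`, `run_pipeline`, `Target`) is made up for illustration, the "detector" is just a fixed temperature threshold on single pixels, the classifier is whatever function you pass in, and the geotagging assumes a camera pointing straight down with the top of the image facing north.

```python
import math
from dataclasses import dataclass
from typing import Callable, List, Set, Tuple

Frame = List[List[float]]  # one temperature-like reading per pixel

METRES_PER_DEG_LAT = 111_320.0  # rough average, good enough for a sketch


@dataclass
class Target:
    label: str
    lat: float
    lon: float


def detect_candidates(frame: Frame, threshold: float) -> List[Tuple[int, int]]:
    """CV stage stand-in: every pixel hotter than a fixed threshold."""
    return [
        (r, c)
        for r, row in enumerate(frame)
        for c, value in enumerate(row)
        if value > threshold
    ]


def geotag(frame: Frame, row: int, col: int, uav_lat: float, uav_lon: float,
           metres_per_pixel: float) -> Tuple[float, float]:
    """Pixel to lat/lon, assuming a nadir camera with image-up = north."""
    centre_row = (len(frame) - 1) / 2
    centre_col = (len(frame[0]) - 1) / 2
    north_m = (centre_row - row) * metres_per_pixel  # rows grow southwards
    east_m = (col - centre_col) * metres_per_pixel
    lat = uav_lat + north_m / METRES_PER_DEG_LAT
    lon = uav_lon + east_m / (
        METRES_PER_DEG_LAT * math.cos(math.radians(uav_lat))
    )
    return lat, lon


def run_pipeline(frame: Frame, threshold: float,
                 classify: Callable[[Frame, int, int], str],
                 wanted: Set[str], uav_lat: float, uav_lon: float,
                 metres_per_pixel: float) -> List[Target]:
    """Detect -> classify -> geotag; only wanted labels are passed on."""
    targets = []
    for row, col in detect_candidates(frame, threshold):
        label = classify(frame, row, col)  # ML stage (caller-supplied)
        if label not in wanted:
            continue  # not what the user is looking for
        lat, lon = geotag(frame, row, col, uav_lat, uav_lon, metres_per_pixel)
        targets.append(Target(label, lat, lon))
    return targets
```

A real system would group hot pixels into blobs before classifying them and would correct for camera tilt, heading, and altitude when geotagging. The point here is only the shape of the hand-off: each stage filters what the next one has to deal with, and only confirmed targets reach the user.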

I think we’ve ended up with the simplest solution (which is of course the best, but never easy to see until it’s done), and I think that’s why we’ve had success where few others have. I’ll leave it at that for the moment and get more technical in the next post. I might even touch on some of the tricks for taking CV to the level of adaptive thresholding for a camera embedded on a UAV, tracking moving targets across changing terrain.